The High-Governance of No-Framework

Background

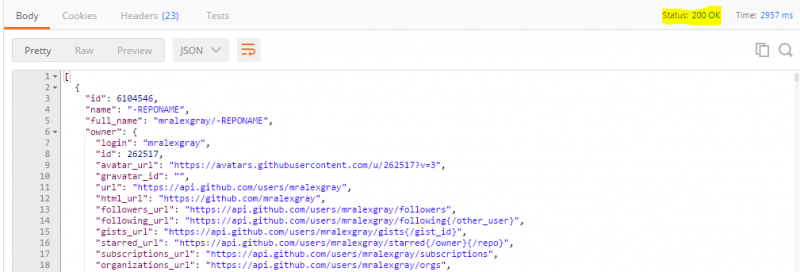

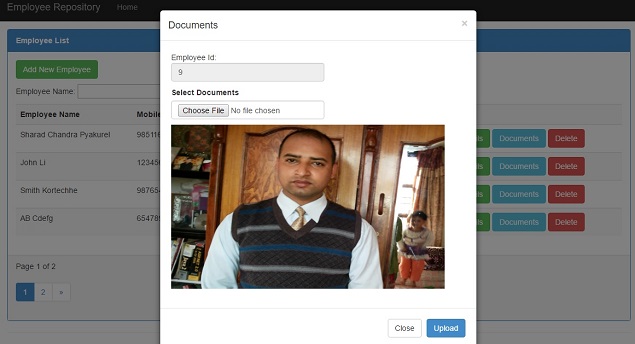

Part 1 of this three-part series explores the background, motivation, and architectural approach to developing a no-framework application. Part 2 presents the implementation of a no-framework application. Part 3 rebuts the arguments made against no-framework application development.

Rebuttals

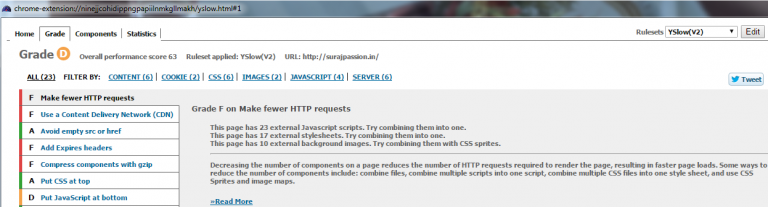

Armed with a working application, we can better refute the arguments made against implementing a no-framework solution, the arguments that inspired this article. While it is true that frameworks perform much of the heavy lifting, they usually carry associated costs: a steeper learning curve for developers, additional proprietary extensions, reduced transparency, vendor lock-in, and, even worse, version lock-in. Relinquishing control of the programming model or breaking the architecture to fit a proprietary framework are both costly in the long run.

Data Binding

The argument against direct DOM manipulation is a matter of governance. Data binding often uses a proprietary layer to perform the DOM manipulation, and that layer's code is typically opaque to developers. Ideally, designers can change the presentation without affecting the view code. In reality, a designer’s knowledge is usually limited to valid HTML. Designers do not insert the declarative binding extensions, so developers still have to add them to the HTML.

Data binding in MVVM enforces the rule that only the view can manipulate the DOM. MVP is “leakier”: it requires stricter guidelines, discipline, and code reviews to enforce the same rule. But this is a process and governance issue, not a technical one. We prefer higher governance, with its transparency and easier debugging, over opaqueness and proprietary extensions in our HTML. Through higher governance, we still retain the separation between the spheres of MV* responsibility.
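To make the governance rule concrete, here is a minimal MVP sketch in TypeScript. It is an illustration under stated assumptions, not the implementation from Part 2: the names `TextTarget`, `CounterModel`, `CounterView`, `DomCounterView`, and `CounterPresenter` are all hypothetical. The point it shows is that nothing in the language stops the presenter from touching an element, so the rule "only the view writes to the DOM" is kept by convention and code review.

```typescript
// All names here are hypothetical and chosen only for illustration.

// The smallest slice of a DOM element that the view needs. A real
// HTMLElement satisfies this shape, and so does a plain object in a test.
interface TextTarget {
  textContent: string | null;
}

// Model: plain state, with no knowledge of the DOM or the view.
class CounterModel {
  private count = 0;

  increment(): number {
    this.count += 1;
    return this.count;
  }
}

// The contract the presenter is allowed to see.
interface CounterView {
  showCount(count: number): void;
}

// View: by team convention, the only class that writes to elements.
class DomCounterView implements CounterView {
  private readonly label: TextTarget;

  constructor(label: TextTarget) {
    this.label = label;
  }

  showCount(count: number): void {
    // Explicit, debuggable DOM update; no binding attributes in the HTML.
    this.label.textContent = `Clicked ${count} times`;
  }
}

// Presenter: coordinates model and view, and never holds an element.
class CounterPresenter {
  private readonly model: CounterModel;
  private readonly view: CounterView;

  constructor(model: CounterModel, view: CounterView) {
    this.model = model;
    this.view = view;
  }

  onIncrement(): void {
    this.view.showCount(this.model.increment());
  }
}
```

In a browser, the label passed to `DomCounterView` would be an element obtained with `document.getElementById`, and the HTML itself stays plain markup that a designer can edit. Because the presenter depends only on the `CounterView` interface, a code review can check the rule by confirming that no element reference appears outside the view class.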

Credit: Chris Solutions